How to Make the Right Choice in Moral Dilemmas

Every so often, life puts you at a crossroads where every option feels wrong. You've seen it in movies, read about it in philosophy books, and, if you're honest, lived it yourself. A friend asks you to cover for them. A colleague corners you with a secret. A doctor must choose who gets the last ventilator. These aren't just dramatic scenarios. They are moral dilemmas, and they test the very core of who you are.

Understanding how to make the right choice in moral dilemmas isn't just an academic exercise. It shapes careers, relationships, policies, and societies. Whether you're a student navigating ethics in your coursework, an aspirant preparing for UPSC's GS Paper IV, or a professional who faces hard calls at work, this guide gives you the frameworks, the psychology, the famous examples, and the practical tools to reason clearly when the stakes are highest.

Let's start from the beginning.

1. What Is a Moral Dilemma? Definition, Origin & Key Terms

A moral dilemma is a situation in which a person must choose between two or more courses of action, each of which involves violating a moral principle or causing harm. Such situations place moral values in direct conflict: honesty vs. loyalty, individual rights vs. collective welfare, justice vs. mercy. They matter because they force us to confront the limits of our ethical reasoning and reveal what we value most when the stakes are high.

The philosophical study of moral dilemmas dates back to ancient Greece. In Book I of Plato's Republic, Socrates asks whether justice requires returning a borrowed weapon to a friend who has since gone mad, setting the duty to return what is owed against the duty to prevent harm. Sophocles' tragedy Antigone dramatizes a conflict between divine law and state law: Antigone must choose between burying her brother (a religious duty) and obeying the king's decree (a civic duty). Both options carry profound moral weight.

The modern academic treatment of moral dilemmas took shape in the 20th century, particularly through the work of philosophers like Bernard Williams and Philippa Foot. Williams argued in his 1965 essay "Ethical Consistency" (reprinted in his 1973 collection Problems of the Self) that genuine moral dilemmas exist: situations where, no matter what you choose, you commit a moral wrong. Foot, meanwhile, introduced the Trolley Problem in 1967 to probe our intuitions about action, inaction, and moral responsibility.

Key terms to know:

- Moral agent: A person capable of making ethical choices

- Ethical conflict: A clash between two or more moral values

- Moral residue: The guilt or regret that lingers even after making the "best" choice in a dilemma

- Ought implies can: The principle that you can only be morally obligated to do what is actually possible

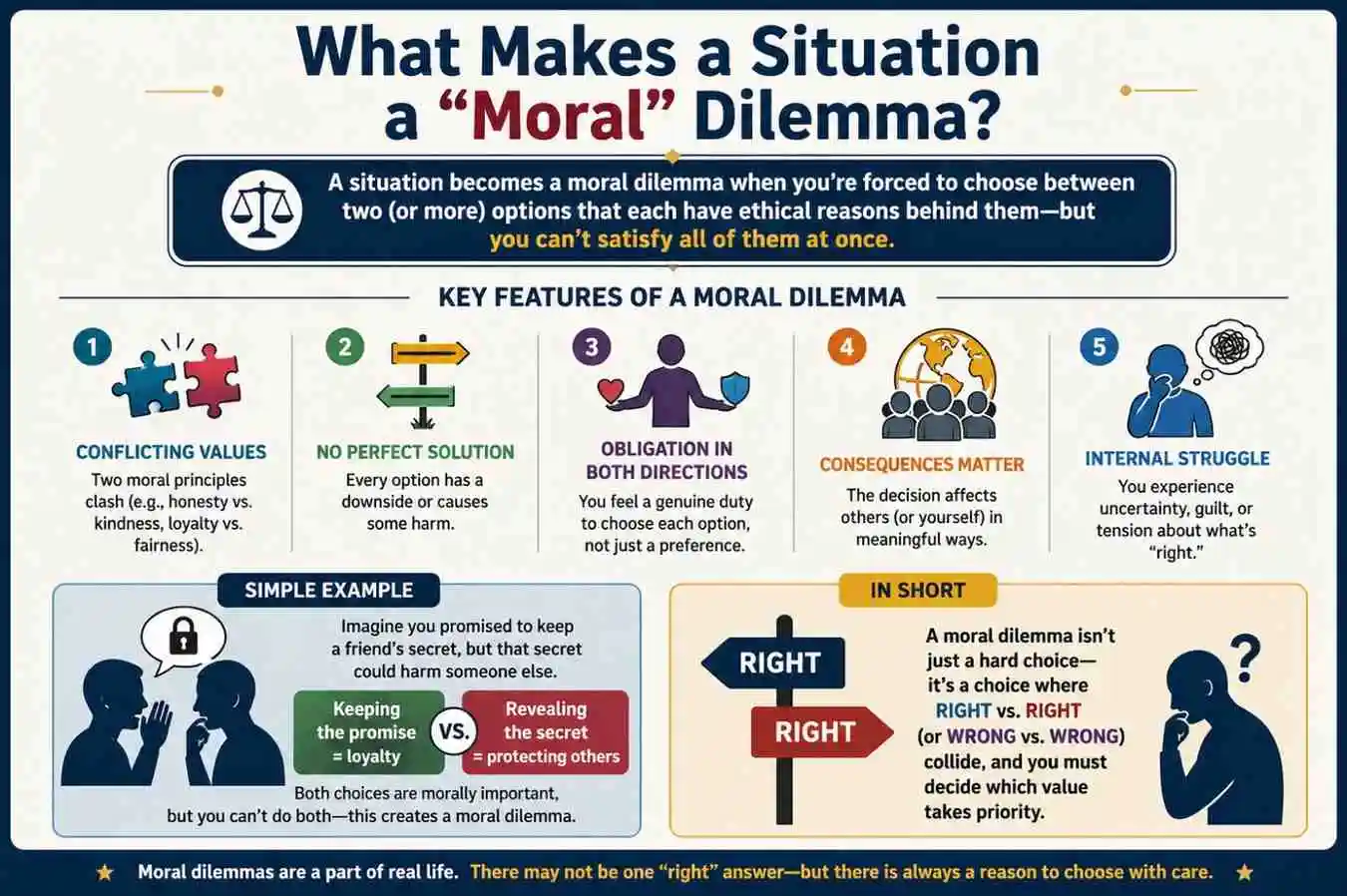

2. What Makes a Situation a "Moral" Dilemma?

Not every hard decision is a moral dilemma. Choosing between two job offers is difficult — but it's not a moral dilemma unless ethical values are at stake. A genuine moral dilemma has three defining features:

First: Genuine moral conflict. Both (or all) options involve a moral claim. One path might require you to lie; the other might cause harm. Neither is morally clean.

Second: No obvious "right" answer. If one option is clearly better by every moral standard, it's a tough decision — not a dilemma. A dilemma exists when reasonable, thoughtful people can disagree about the right path.

Third: Unavoidable moral cost. Whatever you choose, something of genuine moral value is sacrificed. You cannot walk away without having violated, compromised, or lost something that matters morally.

Bullet-point summary:

- Hard decisions ≠ moral dilemmas; moral conflict must be present

- Both options must carry moral weight

- Reasonable people can disagree on the right choice

- Every option has a moral cost — there is no "free" exit

- Moral residue (regret, guilt) is a normal and appropriate response

3. Types of Moral Dilemmas

Philosophers have identified several distinct categories:

3.1 Self-Imposed vs. World-Imposed Dilemmas

A self-imposed dilemma arises from your own past choices — you made a promise you now cannot keep without breaking another. A world-imposed dilemma is thrust upon you by external circumstances — a crisis, a role, or a sudden tragedy forces a choice you didn't invite.

3.2 Epistemic Dilemmas

These occur when two moral requirements conflict and you cannot tell which should take precedence. Often the uncertainty is factual: a doctor unsure of a diagnosis cannot tell whether the duty to act quickly or the duty to avoid unnecessary harm should win, and acting too fast or too slow both carry risk.

3.3 Ontological Dilemmas

These are conflicts in which neither moral requirement overrides the other, so the clash is real rather than a product of limited knowledge. A common example sets a duty to tell the truth (a deontological concern) against a duty to prevent harm (a consequentialist concern).

3.4 Social vs. Personal Dilemmas

A personal dilemma affects mainly you and those close to you. A social dilemma involves collective action — situations where individual self-interest conflicts with the common good (like the classic Prisoner's Dilemma in game theory).
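The Prisoner's Dilemma can be made concrete with a small payoff table. The sketch below is only an illustration: the prison terms are assumed numbers, and the helper names `best_reply_for_a` and `total_years` are hypothetical. It shows that betraying is the self-interested reply whatever the other person does, yet both end up worse off than if they had cooperated.

```python
# Illustrative payoffs (assumed numbers): years in prison for (player A, player B).
# "C" = cooperate with the other prisoner (stay silent), "D" = defect (betray).
YEARS = {
    ("C", "C"): (1, 1),
    ("C", "D"): (3, 0),
    ("D", "C"): (0, 3),
    ("D", "D"): (2, 2),
}


def best_reply_for_a(b_choice):
    """Return the choice that minimises A's own sentence, given B's choice."""
    return min(("C", "D"), key=lambda a_choice: YEARS[(a_choice, b_choice)][0])


def total_years(a_choice, b_choice):
    """Combined sentence for both players: a crude stand-in for the common good."""
    return sum(YEARS[(a_choice, b_choice)])


if __name__ == "__main__":
    for b in ("C", "D"):
        print(f"If B plays {b}, A's self-interested reply is {best_reply_for_a(b)}")
    print("Total years if both defect:", total_years("D", "D"))
    print("Total years if both cooperate:", total_years("C", "C"))
```

With these numbers, defecting is each player's best reply in every case, but mutual defection costs four years in total against two for mutual cooperation. That gap between individual and collective interest is what makes it a social dilemma.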

Bullet-point summary:

- Dilemmas can be self-created or externally imposed

- Epistemic dilemmas involve uncertainty about facts, not just values

- Ontological dilemmas are genuine conflicts in which no moral requirement overrides the other

- Social dilemmas reveal tension between personal and collective interests

- Recognizing the type of dilemma helps select the right reasoning tool

4. Major Ethical Frameworks: How Philosophers Approach Moral Dilemmas

Every major ethical tradition offers a method for resolving moral conflicts. None is perfect — each illuminates some aspects while missing others. Understanding them is essential to thinking clearly.

4.1 Consequentialism (Utilitarianism)

Championed by Jeremy Bentham and John Stuart Mill, utilitarianism is the best-known form of consequentialism, the view that an action is right or wrong because of its outcomes. Utilitarianism holds that the right action is whichever produces the greatest good for the greatest number. In a dilemma, you estimate outcomes (who is helped, who is harmed, and by how much) and choose the option with the best net result.

Real-world application: Public health policy often relies on utilitarian calculus. During the COVID-19 pandemic, governments had to decide whether lockdowns — causing economic harm to millions — were justified by the lives they would save.

4.2 Deontological Ethics (Kantian Ethics)

Immanuel Kant argued that morality is about duty, not outcomes. His "Categorical Imperative" holds that you should act only on principles that you could will to become universal laws: rules that apply to everyone, always. On Kant's view, lying is wrong even to save a life, because a universal practice of lying whenever convenient would destroy the trust that makes truthful communication possible.

Real-world application: Human rights law is fundamentally deontological — certain rights (freedom from torture, right to a fair trial) cannot be violated even if doing so would benefit the majority.

4.3 Virtue Ethics (Aristotelian Ethics)

Aristotle framed the question differently: not "What should I do?" but "What kind of person should I be?" Virtue ethics asks what a person of good character (courageous, just, compassionate, honest) would do in this situation. It emphasizes cultivating moral habits over time rather than applying rigid rules.

Real-world application: Medical ethics often invokes virtue — a good doctor isn't just technically correct; they show compassion, honesty, and integrity even in impossible situations.

4.4 Care Ethics

Developed by Carol Gilligan and Nel Noddings, care ethics emphasizes relationships, context, and the particular needs of those involved. Rather than applying universal rules, it asks: who are the people affected, what are their specific vulnerabilities, and how do I respond with empathy and responsibility?

4.5 Contractarianism (Social Contract Theory)

John Rawls' famous "veil of ignorance" thought experiment asks: what rules would you choose if you didn't know your own place in society — your wealth, race, gender, or abilities? Moral choices should be guided by principles that would be fair to everyone, regardless of their position.

Bullet-point summary:

- Consequentialism: choose the action with the best overall outcome

- Deontology: follow moral rules regardless of consequences

- Virtue ethics: ask what a person of good character would do

- Care ethics: center relationships, context, and vulnerability

- Contractarianism: choose principles you'd accept behind a veil of ignorance

- Most real-world dilemmas require drawing from multiple frameworks

5. The Psychology Behind Moral Decision-Making

Moral choices are not made in a vacuum of pure reason. Psychology — and neuroscience — reveals that our brains process ethical decisions in ways that are fast, emotional, and often unconscious.

Jonathan Haidt's Social Intuitionist Model argues that moral judgments are primarily driven by gut feelings (intuitions), with reasoning used afterward to justify what we already feel. In his famous "Moral Dumbfounding" experiments, people condemned actions they found viscerally repulsive — even when they couldn't articulate a logical reason — and then confabulated explanations.

Neuroscientist Antonio Damasio's research on patients with damage to the ventromedial prefrontal cortex (a region that helps integrate emotion into decision-making) showed that they could reason logically yet made strikingly poor personal and social decisions. This suggests that emotion is not the enemy of moral reasoning; it is essential to it.

Meanwhile, Joshua Greene's dual-process theory distinguishes between:

- Automatic/emotional processing — fast, intuitive, often deontological ("don't push the man off the bridge!")

- Deliberative/rational processing — slow, calculated, often utilitarian ("sacrificing one to save five is mathematically justified")

Understanding your own psychological tendencies helps you recognize when you might be rationalizing rather than reasoning — a critical skill for anyone facing genuine moral choices.

Bullet-point summary:

- Moral judgments are often driven by gut feelings, not pure logic

- Emotions are integral to good moral reasoning — not obstacles to it

- Post-hoc rationalization of gut judgments is common and dangerous, as the "moral dumbfounding" studies show

- Dual-process theory: fast emotional responses vs. slow deliberate reasoning

- Self-awareness about your psychological biases is a moral skill

- Groups and crowds can amplify poor moral reasoning through conformity and diffusion of responsibility

6. Famous Moral Dilemmas That Shaped Ethical Thinking

Philosophers have used thought experiments to expose the structure of moral reasoning. These classic dilemmas remain among the most discussed in ethics.

6.1 The Trolley Problem

A runaway trolley is heading toward five people tied to the tracks. You can pull a lever to divert it to a side track, where one person is tied. Do you pull the lever? Most people say yes. But the famous variation, the Footbridge Problem, asks whether you would push a large man off a bridge to stop the trolley and save the five. Most people say no, even though the arithmetic is identical: one death instead of five. This suggests that, for most people, how harm is caused matters morally, not just the outcome.

6.2 The Heinz Dilemma

Developed by Lawrence Kohlberg to study moral development: Heinz's wife is dying of cancer. A local druggist has discovered a drug that might save her but charges ten times what it costs him to make, and Heinz can raise only about half the price. Should Heinz steal the drug? Kohlberg was less interested in the answer than in the reasoning behind it, and he found that people's justifications reflected different stages of moral development, from rule-following to principled reasoning about justice and human life.

6.3 The Violinist (Judith Jarvis Thomson)

A well-known thought experiment from the abortion debate: you wake up to find that, without your consent, you have been connected to a famous violinist who needs the use of your kidneys for nine months to survive. Do you have a moral obligation to remain connected? Thomson used this to argue that even if a fetus has a right to life, it does not automatically follow that a woman is obligated to sustain it.

6.4 Sophie's Choice

From William Styron's novel (later a film): a mother is forced by a Nazi officer to choose which of her two children will be killed and which will survive. The dilemma has become a cultural shorthand for an impossible moral choice — one where any decision destroys something irreplaceable.

Bullet-point summary:

- The Trolley Problem reveals the moral distinction between harm caused as a side effect and harm used as a means

- The Heinz Dilemma tracks stages of moral development

- Thomson's Violinist challenges intuitions about obligation and bodily autonomy

- Sophie's Choice illustrates genuine moral tragedy — where no choice is "right"

- Thought experiments strip away context to expose our raw moral intuitions

- Real-world dilemmas are messier but follow similar structural patterns

7. Moral Dilemmas in Everyday Life

Most of us will never face a runaway trolley. But moral dilemmas arise constantly — in quieter, more personal forms.

- Honesty vs. kindness: Your friend asks if their business idea is good. It isn't. Do you tell the truth and risk damaging their confidence, or soften it and let them waste time and money?

- Loyalty vs. justice: You discover that a colleague — someone you like and respect — has been padding their expense reports. Do you report them?

- Personal interest vs. duty: You're offered a promotion that requires relocating, just as your aging parent's health is declining. What do you owe your career? What do you owe your family?

- Privacy vs. safety: You read your teenager's messages and discover they're in a dangerous situation. Do you act on information obtained by violating their privacy?

Each of these is a genuine moral conflict. There is no formula that resolves them automatically. But the frameworks above — and the decision-making steps below — give you structured tools for thinking them through.

Bullet-point summary:

- Everyday dilemmas are real moral conflicts, not just "difficult choices"

- Honesty, loyalty, duty, and justice frequently collide in personal life

- The stakes in everyday dilemmas are often relational — people will be hurt

- Avoidance is itself a moral choice (and often a morally costly one)

- Moral courage — choosing the harder right over the easier wrong — is a learnable skill

8. Moral Dilemmas in Professional & Institutional Contexts

Professions create structured moral obligations — codes of ethics, legal duties, fiduciary responsibilities — that can clash with personal conscience or external pressures.

8.1 Medical Ethics

A doctor must decide whether to tell a terminal patient the full truth about their prognosis. The patient's autonomy demands honesty; their wellbeing might be better served by hope. The four principles of biomedical ethics — autonomy, beneficence, non-maleficence, and justice — provide a framework, but they can point in different directions in the same case.

8.2 Legal Ethics

A defense attorney knows their client is guilty. They are legally obligated to provide the best defense possible. Does the professional duty to their client override their intuition about justice? Legal ethics says yes — and explains why: the adversarial system's integrity depends on everyone receiving rigorous defense, regardless of guilt.

8.3 Journalism

A journalist discovers information that is true, important, and newsworthy — but publishing it could endanger a source's life. The public's right to know clashes directly with a duty of care to a specific, vulnerable person.

8.4 Corporate & Business Ethics

Whistleblowing is a classic professional dilemma: an employee discovers illegal or harmful practices. Reporting them may be the right thing, but it risks their livelihood, professional reputation, and relationships. Surveys by the Ethics & Compliance Initiative have repeatedly found that a substantial share of employees who witness misconduct do not report it, with fear of retaliation among the commonly cited reasons.

Bullet-point summary:

- Professions create formal ethical obligations that can clash with conscience

- Medical, legal, journalistic, and corporate ethics each involve distinct dilemmas

- Institutional codes of ethics are guides, not algorithms — judgment is always required

- Whistleblowing is among the hardest professional moral decisions

- Ethical organizations create psychological safety for employees to raise concerns

- Professional moral failures often stem from conformity pressure, not bad character

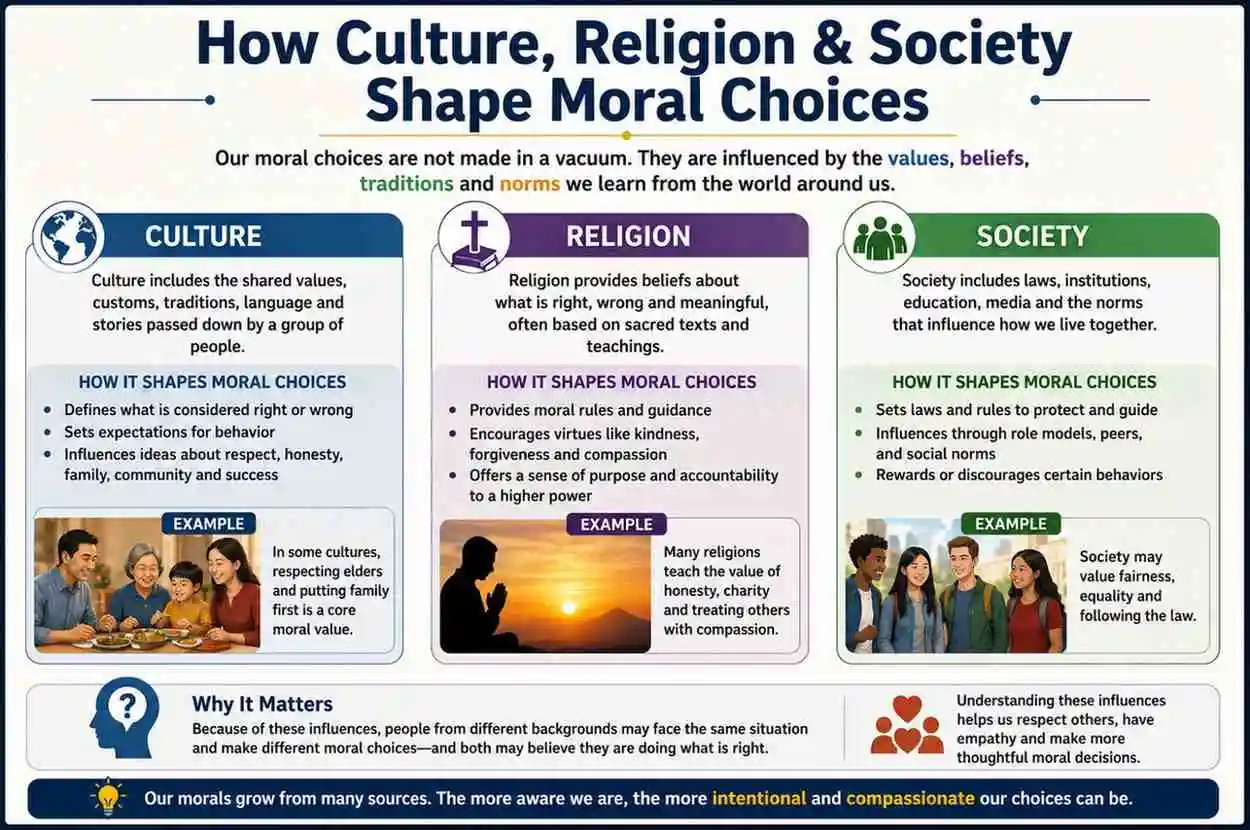

9. How Culture, Religion & Society Shape Moral Choices

Moral reasoning does not happen in a cultural vacuum. What counts as a dilemma — and what counts as the right answer — varies significantly across societies, religions, and historical periods.

Individualist vs. Collectivist cultures: Western cultures tend to prioritize individual rights and autonomy (deontological thinking). East Asian cultures, influenced by Confucianism, often emphasize relational duties, social harmony, and collective welfare — closer to care ethics or virtue ethics. The same dilemma can produce genuinely different "right" answers depending on the cultural framework being applied.

Religious traditions: Most major religions offer ethical frameworks that address dilemmas. Islamic jurisprudence (fiqh) employs a principle of maslaha (public interest) when individual rules conflict. Jewish halacha uses talmudic reasoning to weigh competing obligations. Christian ethics draws on natural law and the "principle of double effect" — the idea that an action with both good and bad consequences can be morally permissible under certain conditions.

Social norms and historical context: What was once considered morally obvious — the divine right of kings, the permissibility of slavery, the exclusion of women from public life — is now considered morally monstrous. This moral evolution is not relativism; it reflects the ongoing refinement of moral understanding through experience, argument, and empathy.

Bullet-point summary:

- Cultural background shapes which moral frameworks feel most natural and "obvious"

- Individualist cultures tend toward rights-based reasoning; collectivist cultures toward relational duties

- Religious traditions provide structured ethical frameworks with long histories of application

- Historical moral progress shows that widely held moral views can be profoundly wrong

- Cross-cultural moral dialogue requires both humility and commitment to core principles

- Universal human rights represent an attempt to identify moral principles across cultural lines

10. Advantages of Confronting Moral Dilemmas

It might be tempting to avoid moral dilemmas — to look away, defer the decision, or pretend the conflict isn't real. But engaging with them honestly offers genuine benefits.

Moral clarity: Wrestling with a genuine dilemma forces you to articulate what you actually value — not what you think you should value. It's a mirror for your deepest commitments.

Better decisions: People who practice ethical reasoning tend to make more consistent, thoughtful decisions over time. The habits of mind built through moral reflection (considering multiple perspectives, questioning assumptions, weighing consequences) can carry over to other domains of judgment.

Character development: Aristotle was right: virtue is built through practice. Confronting moral challenges and choosing well — even imperfectly — builds moral character over time.

Institutional integrity: Organizations that normalize open ethical debate create cultures where problems are surfaced early, misconduct is challenged, and trust is maintained.

Bullet-point summary:

- Engaging with dilemmas clarifies what you genuinely value

- Ethical reasoning practice can improve decision quality in other domains too

- Moral courage — built through difficult choices — compounds over time

- Open ethical culture in organizations reduces misconduct and builds trust

- Avoiding dilemmas doesn't make them go away; it usually makes them worse

- Moral residue (regret) from past choices can be a productive teacher, not just a burden

11. Challenges & Limitations in Resolving Moral Dilemmas

No guide — including this one — can guarantee a "correct" answer to a genuine moral dilemma. Several inherent challenges make resolution difficult.

Moral uncertainty: In most real dilemmas, you don't have complete information. You can't know exactly how your actions will ripple out. Moral reasoning under uncertainty is different from moral reasoning with full information.

Cognitive biases: Confirmation bias, in-group favoritism, loss aversion, and status quo bias all distort moral reasoning. Research by behavioral economist Dan Ariely shows that most people who consider themselves honest will engage in small dishonest acts when the personal cost is low — a gap between moral self-image and moral behavior.

Moral fatigue: Making difficult ethical choices depletes cognitive and emotional resources. Some studies in behavioral ethics suggest that people who face many choices in sequence tend to behave less ethically as the day progresses, a pattern usually discussed under the heading of "decision fatigue." (This is distinct from "ethical fading," the process by which the ethical dimension of a decision drops out of view.)

The limits of theory: No single ethical framework perfectly resolves all dilemmas. Utilitarianism can justify monstrous acts if the math works out. Strict deontology can lead to absurd results (Kant argued you should not lie even to a murderer asking where your friend is hiding). Real wisdom requires using frameworks as tools, not dogmas.

Bullet-point summary:

- Moral uncertainty is normal — certainty in complex dilemmas is often a warning sign

- Cognitive biases systematically distort moral reasoning in predictable ways

- Moral judgment tends to get worse under fatigue and routine

- No single ethical framework is complete or universally applicable

- The gap between moral self-image and moral behavior is real and well-documented

- Acknowledging limitations is itself a moral virtue — it guards against arrogance

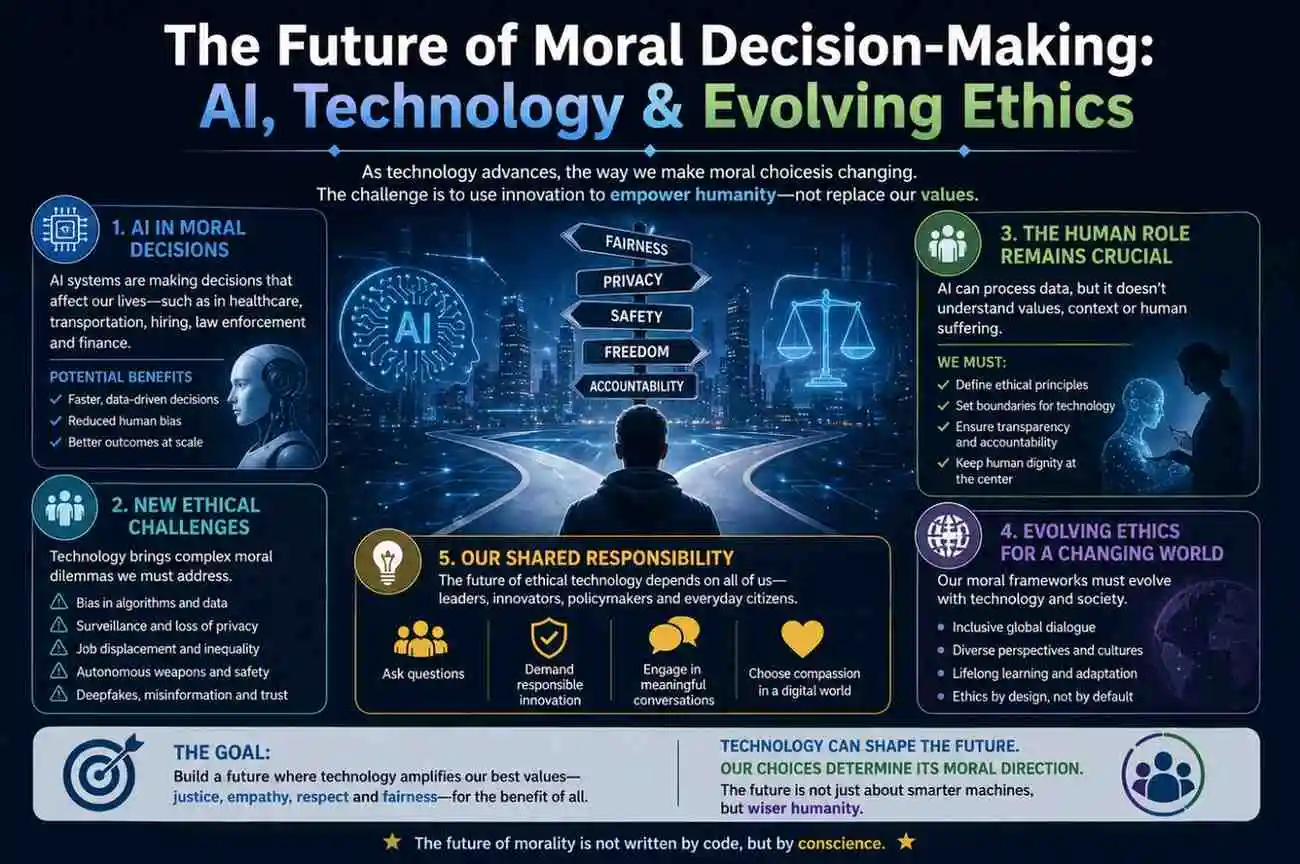

12. The Future of Moral Decision-Making: AI, Technology & Evolving Ethics

As artificial intelligence enters more domains of human life, moral dilemmas are taking new forms — and the stakes are rising.

Algorithmic decision-making: When an AI system denies a loan application, recommends a prison sentence, or triages medical care, who is morally responsible? The programmer? The company? The regulator? The user? This "responsibility gap" is one of the central ethical challenges of our time.

The trolley problem goes digital: Self-driving cars may need decision rules for unavoidable accidents. If a crash is inevitable, should the car prioritize its passengers, pedestrians, or the greatest number of lives? Researchers at MIT's Moral Machine project collected roughly 40 million moral decisions from people in 233 countries and territories. They found some broadly shared preferences, such as sparing more lives rather than fewer, alongside substantial cross-cultural variation in who should be spared.

AI-generated moral advice: Increasingly, people turn to AI tools for guidance on difficult decisions. This raises questions about whether AI can be a genuine moral reasoner — or only a sophisticated simulator of reasoning that lacks the emotional, relational, and experiential foundations that make human moral judgment meaningful.

Data privacy dilemmas: Governments and corporations routinely face trade-offs between surveillance (for security or health) and privacy (for autonomy and dignity). These are genuine moral dilemmas, not just policy questions.

Bullet-point summary:

- AI decision-making creates new "responsibility gaps" in moral accountability

- Self-driving vehicle ethics turned the trolley problem from a thought experiment into a practical design question

- Cross-cultural disagreement on AI moral decisions is empirically documented and significant

- Digital privacy dilemmas are among the most pressing moral challenges of the 2020s

- Future moral frameworks will need to address non-human moral agents

- Technologists, ethicists, policymakers, and citizens all share moral responsibility for AI's trajectory

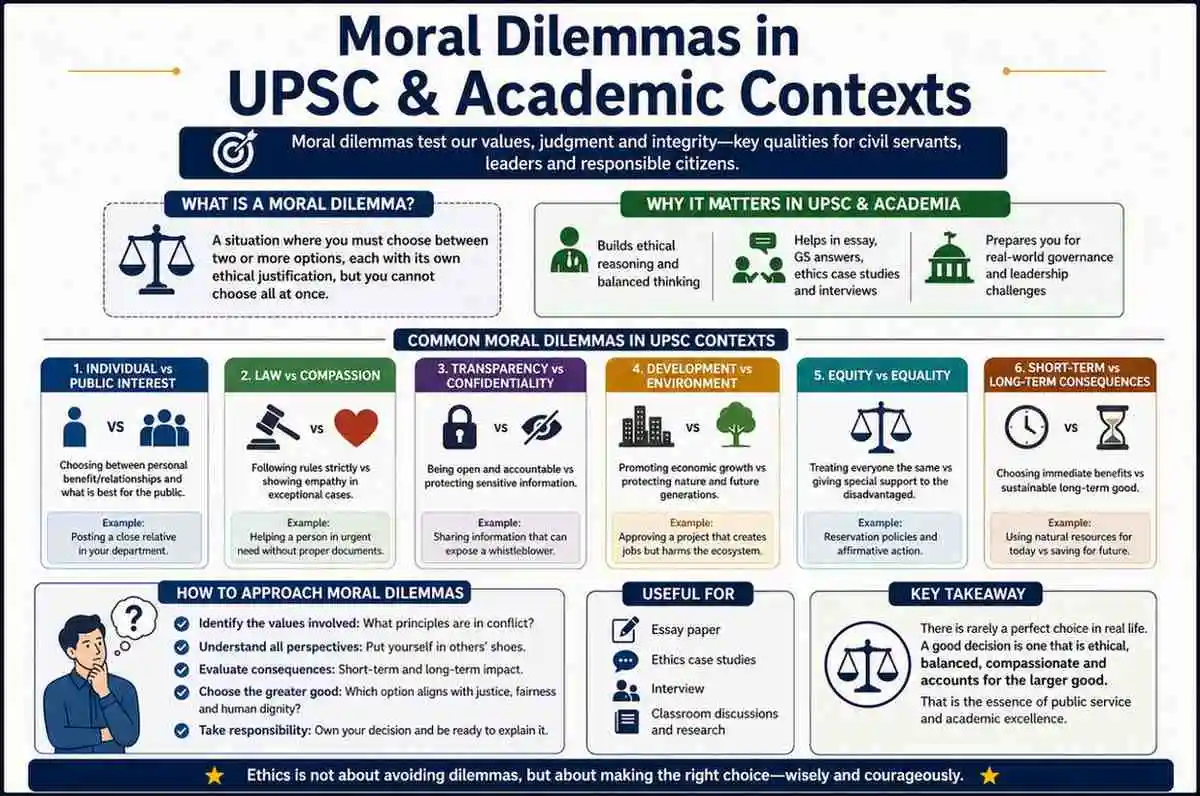

13. Moral Dilemmas in UPSC & Academic Contexts

For students preparing for the UPSC Civil Services Examination, moral dilemmas are a core component of GS Paper IV (Ethics, Integrity & Aptitude) and the Essay Paper. The UPSC expects candidates not just to identify ethical conflicts, but to:

- Apply multiple ethical frameworks (consequentialism, deontology, virtue ethics) to a single case

- Demonstrate awareness of relevant laws, policies, and constitutional values

- Show moral reasoning that is nuanced, not absolutist

- Propose actionable resolutions with acknowledgment of trade-offs

Common UPSC dilemma formats:

- A civil servant discovers corruption by a superior. What do they do?

- A policy that helps many people will displace a vulnerable minority. Is it justified?

- A whistleblower faces retaliation. What are their rights and responsibilities?

In philosophy and psychology courses, moral dilemmas appear as case studies, essay prompts, and research subjects. Kohlberg's moral development stages — from pre-conventional (self-interest) through conventional (rule-following) to post-conventional (principled reasoning) — are a standard framework taught in undergraduate psychology worldwide.

Bullet-point summary:

- UPSC GS Paper IV tests application of ethical frameworks to practical dilemmas

- Answers must show nuance, awareness of trade-offs, and actionable resolution

- Kohlberg's stages of moral development are core curriculum in psychology courses

- Philosophy courses use dilemmas to teach critical thinking and ethical argumentation

- Case-study based learning in law and medicine builds professional moral competence

- Academic moral reasoning differs from everyday reasoning in rigor and explicitness — but both matter

14. How to Develop Your Moral Reasoning Skills: A Practical Guide

Knowing the theory is not enough. Moral reasoning is a skill — and like all skills, it improves with deliberate practice.

Step 1: Identify the moral conflict clearly

Before rushing to a decision, name the values in conflict. "I value honesty, and I value loyalty. Right now, they're pulling in opposite directions." Getting the conflict clear in your mind is half the battle.

Step 2: Gather relevant information

Don't make irreversible moral decisions on incomplete facts. Ask: what do I know, what do I not know, and what could I find out?

Step 3: Apply multiple frameworks

Run the dilemma through at least two ethical frameworks. What does consequentialism recommend? What does a virtue ethics lens suggest? Where do they agree or disagree?

Step 4: Consider all affected parties

Who will be affected by each option? What are their interests, vulnerabilities, and rights? Care ethics asks you to see the people — not just the principles.

Step 5: Consult trusted others

Moral reasoning is not solitary. Talk to people you respect — especially those who might see the situation differently. "Ethics committees" and "ethics hotlines" exist in professional settings for exactly this reason.

Step 6: Make the decision and act

At some point, reasoning must yield to action. The perfect must not become the enemy of the good. Make the best choice you can with the information you have.

Step 7: Reflect afterward

After the decision, reflect: Was it the right call? What did you learn? Moral growth comes from honest post-decision reflection, not just pre-decision analysis.

Bullet-point summary:

- Name the values in conflict before attempting to resolve the dilemma

- Incomplete information is a moral risk — gather what you can

- Apply multiple ethical frameworks; where they agree, confidence increases

- Center the people affected, not just the abstract principles

- Consult trusted others — moral reasoning benefits from dialogue

- Act decisively once you've reasoned carefully — avoidance is also a choice

- Reflect afterward to build moral skill over time

FAQ: Moral Dilemmas — Your Questions Answered

Q1: What is the difference between a moral dilemma and an ethical dilemma?

The terms are often used interchangeably, but a subtle distinction exists. A moral dilemma involves a personal conflict between values you hold — it concerns your inner sense of right and wrong. An ethical dilemma typically refers to conflicts within a professional or institutional framework governed by formal codes of ethics (medical ethics, legal ethics). In practice, most dilemmas involve both dimensions simultaneously.

Q2: Is there always a "right" answer to a moral dilemma?

Not necessarily. Philosophers genuinely disagree on this. Some argue that one option is always objectively better, even if the difference is small. Others — like Bernard Williams — hold that genuine moral dilemmas exist in which any choice leaves a moral remainder (regret, residue) that cannot be entirely eliminated. In practical terms: there is often a better answer, even if not a perfect one, and reasoning carefully increases your chances of finding it.

Q3: Can artificial intelligence solve moral dilemmas better than humans?

No, at least not yet, and arguably not in principle. AI systems can model outcomes, apply rules, and identify inconsistencies in reasoning. But they lack the emotional attunement, relational context, and embodied experience that ground human moral judgment. The MIT Moral Machine project found that crowd-sourced human responses to self-driving-car trolley problems varied substantially by culture, which suggests there is no single answer for an algorithm to encode. AI can assist moral reasoning; it cannot replace it.

Q4: How are moral dilemmas relevant to UPSC preparation?

Moral dilemmas are central to UPSC GS Paper IV (Ethics, Integrity and Aptitude) and case study questions. The examination expects candidates to identify ethical conflicts, apply frameworks such as utilitarianism, Kantian ethics, and virtue ethics, and propose nuanced, actionable solutions. Candidates who understand both theory and practical application are better placed to score well on ethics papers. Familiarity with landmark dilemmas (the Heinz Dilemma, trolley problems, whistleblowing scenarios) provides strong conceptual anchors.

Q5: Is a moral dilemma the same as a logical paradox?

No. A logical paradox involves a contradiction within a formal reasoning system, and it can often be resolved by identifying a flaw in the logic. A moral dilemma involves a conflict between genuine values: both options have real moral weight, and the conflict cannot be dissolved simply by thinking more clearly. Moral dilemmas are real-world conflicts, not logical puzzles.

Q6: How does moral courage differ from ordinary bravery?

Ordinary bravery confronts physical danger — it is about overcoming fear of harm to oneself. Moral courage confronts social and psychological danger — the fear of disapproval, retaliation, ostracism, or conflict. A whistleblower, a dissenting board member, or a person who challenges a popular but wrong belief all require moral courage. Like physical bravery, moral courage can be developed through practice, mentors, and organizational cultures that reward integrity over conformity.

Q7: A common misconception — is staying neutral in a moral dilemma the "safe" choice?

No. Neutrality is itself a moral choice, and in many situations an actively harmful one. A line often attributed to Edmund Burke, though it does not appear in his known writings, captures the idea: evil triumphs when good people do nothing. When you witness injustice and choose not to act, you have made a decision with moral consequences. The feeling that inaction is "safe" reflects well-documented cognitive biases (omission bias and status quo bias), not a moral truth.

Conclusion: The Art of Choosing Well

Moral dilemmas are not problems to be solved once and forgotten. They are a permanent feature of human life — wherever values coexist, they will sometimes conflict. The question is not whether you will face them, but whether you will be ready when you do.

The frameworks in this guide — from Aristotle's virtue ethics to Rawls' veil of ignorance, from the psychology of moral intuition to the practical seven-step reasoning process — are not formulas. They are lenses. Used together, they help you see a dilemma from multiple angles, weigh what genuinely matters, and make a choice you can defend — not just to others, but to yourself.

Three takeaways to carry with you: First, name the conflict honestly — clarity about what values are at stake is the foundation of good moral reasoning. Second, no single ethical theory is sufficient — wisdom lies in knowing which lens to apply and when. Third, moral courage is learnable — every time you choose the harder right over the easier wrong, you become more capable of doing it again.

Watch for the growing intersection of technology and ethics — AI, algorithmic decision-making, and digital privacy are reshaping what moral dilemmas look like in the 21st century. The principles, however, remain ancient.

If this guide helped you think more clearly about ethics, share it with someone facing a hard choice. Bookmark it for your UPSC preparation. And explore our related articles on ethical governance, psychological biases in decision-making, and the philosophy of justice. The conversation on how to live well never ends — and it's always worth continuing.